At the moment, people seem to believe there’s a “bubble” in seed-stage technology funding. Many limited partner investors in VC funds I’ve spoken with have raised the concern and related topics seem popular on Quora (see here, here, and here). However, I’ve examined the data and it argues pretty strongly against a widespread seed-stage bubble.

Rather, I think the increased attention that top startups attract these days induces availability bias. Because Y Combinator and superangels generate pretty intense media coverage, people read more frequently about the few big investments in seed-stage startups. They confuse the true frequency of high valuations with the amount of coverage. Of course, they never read about all the other seed-stage startups that don’t get high valuations.

But if you look at the data on the aggregate amount of seed funding and the average deal size, I think it’s very hard to argue for a general seed-stage bubble. At worst, there may be a very localized bubble centered around consumer Internet startups based in the Bay Area.

First, look at the amount of seed funding by angels over the last nine years, as reported by the Center for Venture Research. I calculated the amount for each year by multiplying the reported total amount of funding by the reported percentage going to seed and early stage deals. (Note: for some reason the CVR didn’t report the percentage for 2004, so I interpolated that value.)
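The calculation above can be sketched in Python. This is a minimal sketch, assuming the missing 2004 percentage was filled by simple linear interpolation between 2003 and 2005 (the post says only that the value was interpolated, not how). The function names and the sample figures are hypothetical placeholders, not CVR data.

```python
from __future__ import annotations


def interpolate_missing(shares: dict[int, float | None]) -> dict[int, float]:
    """Fill each missing year's share with the mean of its neighboring years.

    Assumes simple linear interpolation and that both neighbors are present.
    """
    filled: dict[int, float] = {}
    for year, share in shares.items():
        if share is None:
            filled[year] = (shares[year - 1] + shares[year + 1]) / 2
        else:
            filled[year] = share
    return filled


def seed_dollars(totals: dict[int, float], shares: dict[int, float]) -> dict[int, float]:
    """Seed/early-stage dollars = total angel dollars x share going to seed/early stage."""
    return {year: totals[year] * shares[year] for year in totals}


# Hypothetical placeholder inputs ($B and fractions), NOT CVR figures.
totals = {2003: 10.0, 2004: 12.0, 2005: 14.0}
shares = {2003: 0.40, 2004: None, 2005: 0.50}

filled = interpolate_missing(shares)
for year, amount in seed_dollars(totals, filled).items():
    print(year, round(amount, 2))
```

Interpolating the percentage, rather than the dollar amount, keeps the reported 2004 total intact and only estimates the one number the CVR left out.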

As you can see, the amount of seed funding by angels in 2009-2010 was down by half from its level in 2004-2006. It’s hard to have a bubble when you’re investing only half the dollars you were at the recent peak. But perhaps it’s a pricing issue and angels are pumping more dollars into each startup. While the CVR doesn’t break down the average investment amount at each stage, we can calculate the average investment amount across all stages and use it as a rough index for what is probably going on at the seed and early stage (an index value of 100 corresponds to a $436K investment).
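A minimal sketch of the index and the percentage-change arithmetic used in this post, assuming the index is simply the average investment scaled so that $436K reads as 100 (the post doesn’t name the base year, so none is assumed). The function names are hypothetical.

```python
BASE_INVESTMENT = 436_000  # dollars; the average investment that maps to an index of 100


def deal_size_index(avg_investment: float, base: float = BASE_INVESTMENT) -> float:
    """Express an average investment amount as an index where `base` == 100."""
    return 100 * avg_investment / base


def pct_change(new: float, old: float) -> float:
    """Percentage change from `old` to `new` (negative means a decline)."""
    return 100 * (new - old) / old


print(deal_size_index(218_000))  # half the base amount reads as 50 on the index
print(pct_change(50, 100))       # "down by half" is a -50% change
```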

The amount invested in each startup in 2010 was down 35% from its 2006 peak. Now, the investment amount is not the same as the valuation. However, for a variety of reasons (anchoring on historical ownership, capitalization table management, and price equilibrium for the marginal startup), I doubt angels have radically changed the percentage of a company they try to own. So deal size shifts should be a good proxy for valuation shifts.
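The proxy argument rests on one identity: the post-money valuation is the check size divided by the fraction of the company bought, so if that fraction stays fixed, valuation moves by exactly the same percentage as deal size. A minimal sketch, assuming a constant 25% ownership target chosen purely for illustration (the post doesn’t state a typical percentage); the check sizes are also hypothetical.

```python
def post_money_valuation(investment: float, ownership: float) -> float:
    """Post-money valuation implied by buying `ownership` (0-1) for `investment` dollars."""
    if not 0 < ownership <= 1:
        raise ValueError("ownership must be in (0, 1]")
    return investment / ownership


OWNERSHIP = 0.25  # hypothetical, held constant across years

peak = post_money_valuation(1_000_000, OWNERSHIP)
later = post_money_valuation(650_000, OWNERSHIP)  # a 35% smaller check
print(later / peak)  # 0.65: valuation falls by the same 35%
```

If angels did raise their ownership targets over time, valuations would have fallen even more than deal sizes, which only strengthens the no-bubble reading.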

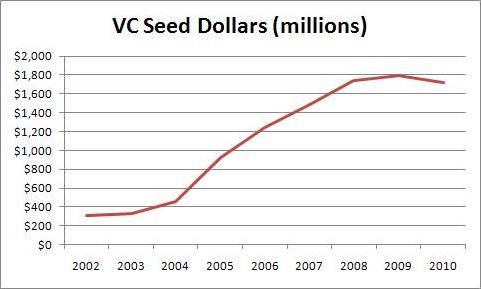

Now, you might think that VCs’ moves into the seed stage market could be a factor. Probably not, for two reasons. First, VCs account for a much smaller share of the seed stage market. Second, what gets counted as the seed stage in the VC data isn’t what most of us think of as seed stage investments. Check out the seed dollar chart and the average seed investment data from the National Venture Capital Association.

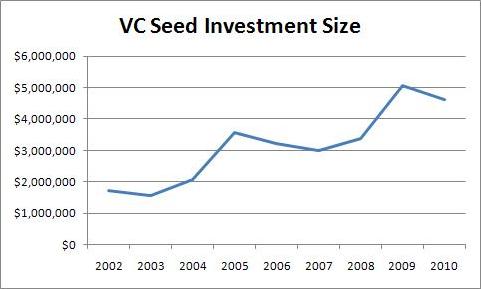

Notice that the amount of seed funding by VCs has remained flat for the last three years. Moreover, angels put roughly three dollars into the seed stage for every dollar from VCs. So VCs probably aren’t contributing to a widespread seed bubble. But the story takes a strange twist if you look at the average size of VCs’ seed stage investments.

The size has increased since 2007. But look at the absolute level! $4M+ seed rounds? I’m starting to think that “seed” does not mean the same thing to VCs as it does to angels and entrepreneurs. Obviously, VCs cannot be affecting what I think of as the seed round very much. However, they could be generating the impression of a bubble by enabling a few “mega-seed” deals. VCs did 373 seed deals in 2010 while angels did around 20,000 (NVCA and CVR data, respectively).
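To put the dollar ratio and the deal counts on a common scale, here is a small sketch that converts both into shares of the combined total. The inputs are the figures quoted above (a 3:1 angel-to-VC dollar ratio, and 373 VC seed deals versus roughly 20,000 angel deals in 2010); the helper name is hypothetical.

```python
def share(part: float, other: float) -> float:
    """Fraction of the combined total contributed by `part`."""
    return part / (part + other)


# Dollars: angels invest about $3 in seed deals for every $1 from VCs.
vc_dollar_share = share(1, 3)

# Deal counts for 2010: 373 VC seed deals vs. roughly 20,000 angel deals.
vc_deal_share = share(373, 20_000)

print(f"VC share of seed dollars: {vc_dollar_share:.0%}")
print(f"VC share of seed deals:   {vc_deal_share:.1%}")
```

VCs supply about a quarter of seed dollars through under 2% of seed deals, which is what a handful of “mega-seed” rounds would look like in the data.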

The last factor we have to account for is the superangels. Most of them are not members of the NVCA. However, they probably aren’t counted by the CVR surveys of individual angels and angel groups either. ChubbyBrain has a list of the superangels that seems pretty complete; I can’t think of anyone I consider a superangel who isn’t on it. Fund sizes are known for 13 of the 16. Two of them (Felicis and SoftTech VC) are members of the NVCA and thus included in that data. The remaining 11 total $253M.

Now, there are probably some smaller, lesser-known superangels not on this list. However, many on the list will not invest all their dollars in a single year, and some will invest dollars in follow-on rounds past the seed stage. So I’m confident that $253M is a generous estimate of the superangel dollars that go into the seed stage each year. That’s only about 3% of the seed dollars from angels and VCs combined.
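The superangel estimate can be sketched the same way: drop funds already counted in the NVCA data, skip funds of unknown size, and sum the rest. A minimal sketch; the fund names and sizes below are hypothetical placeholders (the post reports only the $253M total for the 11 non-NVCA funds), and counting each fund’s full size as one year of seed investment is the post’s deliberately generous upper bound.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Fund:
    name: str
    size_musd: float | None  # fund size in $M; None when unknown
    nvca_member: bool


def superangel_seed_upper_bound(funds: list[Fund]) -> float:
    """Sum known fund sizes, excluding NVCA members (already in the VC data).

    Counts each fund's entire size as one year of seed investment, which
    overstates the true figure: funds deploy over several years and some
    dollars go to follow-on rounds.
    """
    return sum(
        f.size_musd
        for f in funds
        if f.size_musd is not None and not f.nvca_member
    )


# Hypothetical placeholder funds, not the ChubbyBrain list.
funds = [
    Fund("Fund A", 100.0, False),
    Fund("Fund B", 50.0, True),   # NVCA member: excluded to avoid double counting
    Fund("Fund C", None, False),  # size unknown: excluded
    Fund("Fund D", 25.0, False),
]

print(superangel_seed_upper_bound(funds))
```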

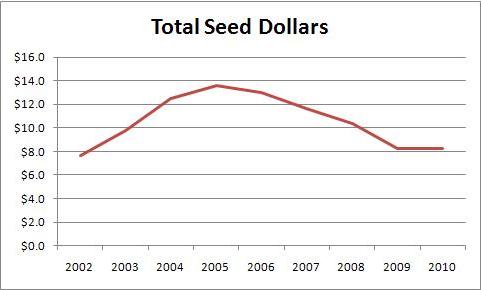

Just to really drive the point home, here’s a graph of all seed dollars, assuming superangels did $253M per year in 2009 and 2010. Seed funding is down $5.4B, or 40%, from its peak in 2005! So I don’t believe there’s a bubble.
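As a consistency check on the headline figures: a $5.4B drop that equals 40% of the peak implies a 2005 peak of roughly $13.5B and a 2010 total of roughly $8.1B, and $253M against the non-superangel remainder comes out near the 3% figure cited earlier. Both percentages in the post are rounded, so treat these back-of-the-envelope levels as approximate. The function name is hypothetical.

```python
SUPERANGEL_B = 0.253  # $B per year, the post's generous superangel estimate


def implied_levels(drop: float, pct_drop: float) -> tuple[float, float]:
    """Back out (peak, current) from an absolute drop and the fractional drop from peak."""
    peak = drop / pct_drop
    return peak, peak - drop


peak, current = implied_levels(5.4, 0.40)  # $B
# Superangel dollars relative to angel + VC seed dollars in the 2010 total.
superangel_share = SUPERANGEL_B / (current - SUPERANGEL_B)

print(f"peak ~ ${peak:.1f}B, 2010 ~ ${current:.1f}B")
print(f"superangels vs. angels + VCs ~ {superangel_share:.0%}")
```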

(The spreadsheet with all my data is here.)